Artificial Evaluation vs Real Performance Observation

Understanding the Critical Difference in Modern Decision Making

Introduction

In many modern systems, especially those involving education, recruitment, artificial intelligence, and performance management, evaluation is often conducted through artificial or simulated methods. These include tests, benchmarks, metrics, scores, and predefined scenarios. In contrast, real performance observation focuses on how individuals or systems behave in actual environments under real constraints. Although both approaches aim to measure capability, they are fundamentally different in what they capture, what they miss, and how reliable their conclusions are.

This distinction is not merely theoretical. It directly affects hiring decisions, product design, AI deployment, organizational efficiency, and long-term trust in systems.

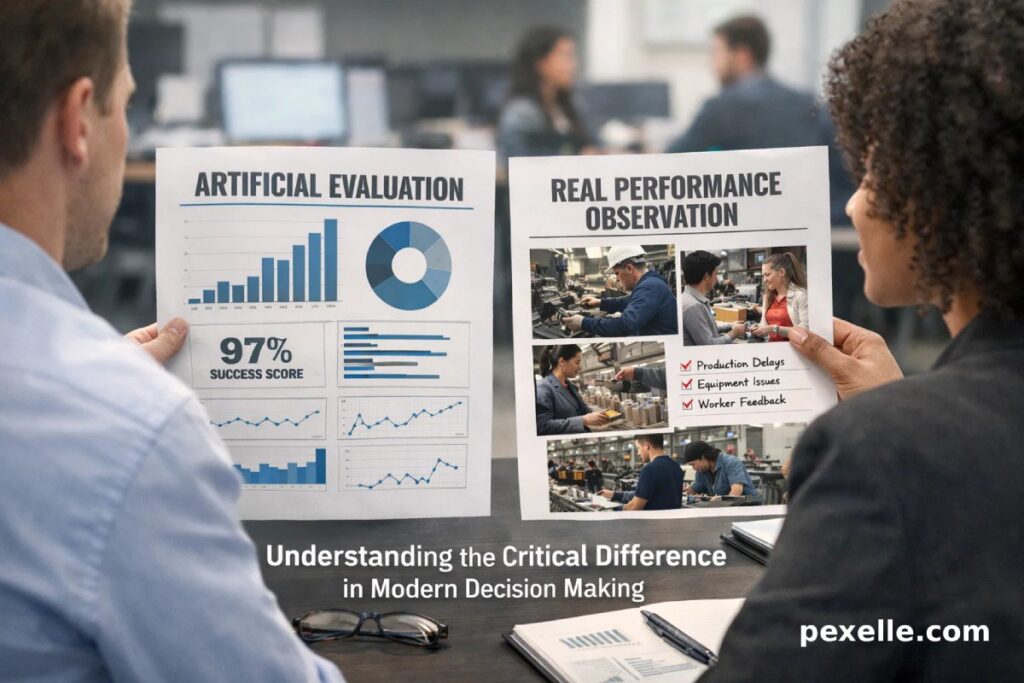

What Is Artificial Evaluation

Artificial evaluation refers to assessment methods designed in controlled or simulated environments. Examples include standardized tests, coding challenges, model benchmarks, KPIs, and lab-based experiments. These methods are typically structured, repeatable, and optimized for comparability.

Their main strengths are scalability and consistency. Artificial evaluations make it possible to compare thousands of candidates or systems using the same criteria. They also reduce noise by eliminating environmental variables. However, this control comes at a cost.

Artificial evaluations tend to measure how well someone performs within the rules of the test, not how well they perform in reality. Over time, subjects learn to optimize for the evaluation itself rather than the underlying skill it is supposed to represent.

What Is Real Performance Observation

Real performance observation evaluates behavior in real conditions. This includes on-the-job performance, real-world problem solving, collaboration under pressure, adaptation to change, and decision making with incomplete information.

Unlike artificial evaluation, real observation is contextual. It captures trade-offs, constraints, human factors, and unexpected events. It reveals not only what someone can do, but how they do it and why.

The downside is that real observation is harder to scale, harder to standardize, and more expensive. It also requires time, trust, and often qualitative judgment rather than simple scoring.

Key Differences Between the Two Approaches

| Dimension | Artificial Evaluation | Real Performance Observation |
|---|---|---|
| Environment | Controlled and simulated | Uncontrolled and real |
| Scalability | High | Low to medium |
| Context awareness | Minimal | High |
| Resistance to gaming | Low | High |
| Signal depth | Narrow | Deep |
| Long-term predictability | Often weak | Stronger |

Artificial evaluation answers the question: can this entity perform well under designed conditions?

Real observation answers the question: can this entity create value in reality?

Why Artificial Evaluation Often Fails

Artificial evaluations fail when the evaluation becomes the goal. This phenomenon, commonly known as Goodhart's law, is recognized across economics, AI, and organizational theory: when a measure becomes a target, it ceases to be a good measure.

People and systems adapt. Test takers learn test strategies. AI models overfit benchmarks. Employees optimize KPIs rather than outcomes. As a result, high scores no longer correlate with real effectiveness.
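
The loss of correlation described above can be made concrete with a small calculation. The sketch below uses made-up numbers for six hypothetical candidates and compares how well test scores track real outcomes before and after everyone starts preparing specifically for the test. The data, the variable names, and the choice of Pearson correlation as the measure are all illustrative assumptions, not results from any study.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


# Hypothetical real-world outcome ratings for six candidates (made-up data).
real_outcome = [2, 4, 5, 6, 8, 9]

# Before anyone targets the test, scores roughly follow the underlying skill.
scores_before = [20, 42, 48, 63, 79, 88]

# After test-specific preparation, everyone clusters near the ceiling and the
# remaining differences say much less about real outcomes.
scores_after = [90, 88, 93, 89, 91, 92]

r_before = pearson(scores_before, real_outcome)
r_after = pearson(scores_after, real_outcome)

print(f"correlation before gaming: {r_before:.2f}")
print(f"correlation after gaming:  {r_after:.2f}")
```

In this toy data the scores themselves went up for almost everyone, yet they became a much weaker signal of real effectiveness, which is the pattern the paragraph above describes.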

Another limitation is abstraction. Real-world performance involves ambiguity, social interaction, ethical judgment, and evolving constraints. These factors are extremely difficult to encode into artificial tests without oversimplifying them.

Why Real Observation Is More Trustworthy

Real performance observation brings evaluation closer to the causes of a result instead of relying on a proxy that merely correlates with it. It shows how results are produced, not just that they exist. This makes it harder to fake and easier to trust.

It also reveals second-order effects such as resilience, learning speed, communication quality, and ethical behavior. These dimensions are often invisible in artificial evaluations but critical in real systems.

For AI systems in particular, real-world observation is essential to detect failure modes that benchmarks never reveal, such as bias under deployment conditions or degradation over time.
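
One way to watch for degradation over time is to compare a rolling window of live outcomes against the score a system earned on its benchmark. The following is a minimal sketch under stated assumptions: the class name `DegradationMonitor`, the window size, and the tolerance threshold are hypothetical choices for illustration, and it assumes each live prediction can eventually be labeled correct or incorrect.

```python
from collections import deque


class DegradationMonitor:
    """Flags when live accuracy falls well below the benchmark baseline."""

    def __init__(self, baseline_accuracy, window=50, tolerance=0.10):
        self.baseline = baseline_accuracy      # accuracy measured on the benchmark
        self.tolerance = tolerance             # allowed drop before raising a flag
        self.outcomes = deque(maxlen=window)   # keeps only the most recent outcomes

    def record(self, correct):
        """Store one real-world outcome (True if the system was right)."""
        self.outcomes.append(1 if correct else 0)

    def live_accuracy(self):
        """Accuracy over the current window, or None if nothing is recorded."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def degraded(self):
        """True once a full window shows accuracy below baseline minus tolerance."""
        if len(self.outcomes) < self.outcomes.maxlen:
            return False  # not enough real evidence yet to judge
        return self.live_accuracy() < self.baseline - self.tolerance


monitor = DegradationMonitor(baseline_accuracy=0.9, window=10, tolerance=0.1)
for _ in range(10):
    monitor.record(True)       # early deployment matches the benchmark
healthy_at_start = monitor.degraded()
for _ in range(5):
    monitor.record(False)      # later outcomes start going wrong
degraded_later = monitor.degraded()
print(healthy_at_start, degraded_later)
```

A monitor like this only covers overall accuracy. Detecting bias under deployment conditions would need outcomes broken down by the groups or contexts of concern, which this sketch does not attempt.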

The Role of Hybrid Evaluation Models

The most effective systems do not choose between artificial evaluation and real observation. They combine both.

Artificial evaluation is useful for initial filtering, baseline comparison, and regression testing. Real performance observation is essential for validation, trust building, and long-term decision making.

A strong hybrid model uses artificial evaluation as an entry point, then continuously updates judgments based on real-world evidence. In such systems, real performance can correct or even override artificial scores.
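
A hybrid model of this kind can be sketched as a running estimate that starts from the test score and shifts as real outcomes arrive. The example below is one possible formulation, not a prescribed method: it treats the test score as a weak Beta-style prior and counts each observed success or failure as new evidence. The class name `HybridJudgment` and the `prior_weight` parameter (how many real observations the test is assumed to be worth) are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class HybridJudgment:
    """Running estimate of success probability, seeded by a test score."""

    alpha: float  # pseudo-count of successes
    beta: float   # pseudo-count of failures

    @classmethod
    def from_test_score(cls, score, prior_weight=4.0):
        # score is the artificial evaluation result, normalized to [0, 1].
        # prior_weight is an assumed tuning knob: the number of real
        # observations the test score is treated as being worth.
        if not 0.0 <= score <= 1.0:
            raise ValueError("score must be in [0, 1]")
        if prior_weight <= 0:
            raise ValueError("prior_weight must be positive")
        return cls(alpha=score * prior_weight, beta=(1.0 - score) * prior_weight)

    def observe(self, success):
        """Fold one real-world outcome into the estimate."""
        if success:
            self.alpha += 1.0
        else:
            self.beta += 1.0

    @property
    def estimate(self):
        """Current estimated probability of success."""
        return self.alpha / (self.alpha + self.beta)


# A candidate who scored 0.9 on the test but then struggles in practice.
judgment = HybridJudgment.from_test_score(0.9)
initial_estimate = judgment.estimate
for outcome in [False, False, True, False, False, True, False, False]:
    judgment.observe(outcome)
updated_estimate = judgment.estimate
print(f"initial: {initial_estimate:.2f}, after real evidence: {updated_estimate:.2f}")
```

Keeping `prior_weight` small means a handful of real observations is enough to outweigh the test score, which mirrors the idea that real performance can correct or override an artificial result.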

Implications for Organizations and Technology

Organizations that rely solely on artificial evaluation risk optimizing for appearances rather than outcomes. This leads to poor hires, fragile systems, and misaligned incentives.

Conversely, organizations that integrate real performance observation gain a more accurate understanding of capability, potential, and risk. They also build cultures that reward impact rather than compliance.

In technology and AI, this distinction determines whether systems remain academic demonstrations or become reliable infrastructure.

Conclusion

Artificial evaluation and real performance observation are not interchangeable. They measure different things and serve different purposes. Artificial evaluation offers efficiency and comparability, while real observation provides depth and truth.

In any domain where trust, adaptability, and long-term value matter, real performance observation must play a central role. Artificial evaluation can support the process, but it should never be mistaken for reality itself.

The future of credible assessment lies not in better tests alone, but in systems that can observe, learn from, and respond to real-world performance over time.

Source: Medium.com