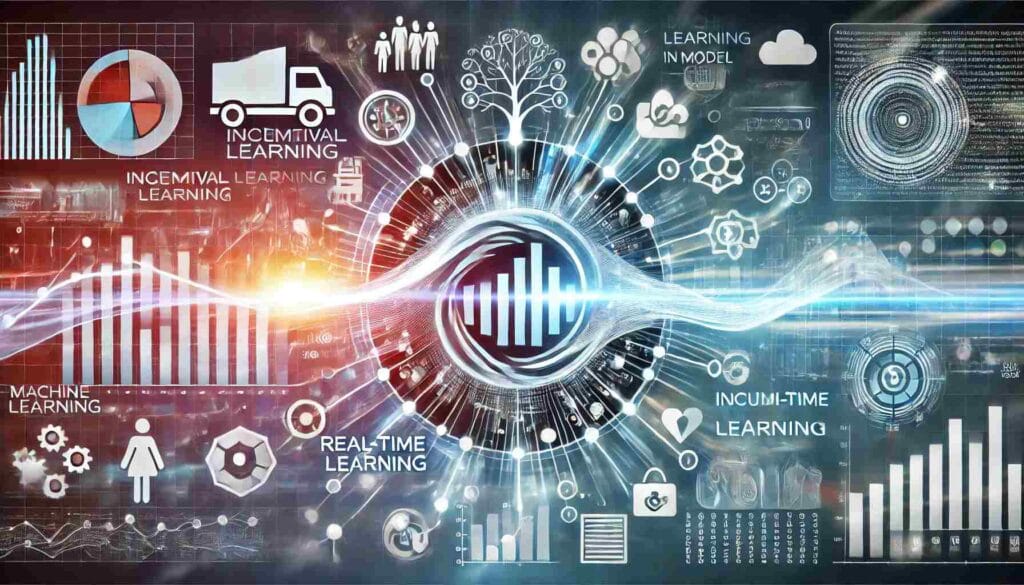

Incremental Learning: An Overview

Incremental learning, a term often used interchangeably with online learning, refers to a machine learning paradigm in which the model is updated continuously as new data becomes available. Unlike traditional batch learning, where the model is trained once on a fixed dataset, an incremental learner updates itself on each new sample or small batch. This makes it well suited to real-time applications and to datasets too large to process at once.

Key Characteristics of Incremental Learning

- Data Availability: In incremental learning, data is not available in one large batch. Instead, data is processed in smaller chunks or continuously, which allows the model to adapt over time as new information is received.

- Memory Efficiency: Incremental learning models are designed to keep memory use low. Because each sample or chunk can be discarded once the model has been updated, the model does not need to store all past data and can handle large datasets that do not fit into memory at once.

- Real-time Adaptability: One of the most important features of incremental learning is its ability to adapt to new data as it becomes available. This makes it ideal for applications that require real-time processing, such as stock market predictions, social media analysis, and sensor data processing.

- Continuous Training: The model is trained continuously rather than in one large training phase. This helps the model to learn from new data without the need to retrain from scratch.

- Dynamic Model Update: As new data is introduced, the model parameters are updated to reflect the changes, so the model keeps tracking the latest trends and patterns.
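The characteristics above can be illustrated with a very small example. The sketch below is not a full learning algorithm; it only shows the core pattern of updating a summary of the data one value at a time without storing past values, using Welford's method for a running mean and variance. The class name `RunningStats` and the sample values are assumptions made for illustration.

```python
class RunningStats:
    """Tracks the mean and variance of a stream without storing past values."""

    def __init__(self):
        self.n = 0
        self.mean = 0.0
        self._m2 = 0.0  # running sum of squared deviations from the mean

    def update(self, x):
        # Welford's update: constant time and constant memory per value.
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self._m2 += delta * (x - self.mean)

    @property
    def variance(self):
        # Population variance; 0.0 until at least one value has been seen.
        return self._m2 / self.n if self.n else 0.0


stats = RunningStats()
for value in [2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0]:
    stats.update(value)  # each value is seen once and then discarded

print(stats.mean, stats.variance)
```

The same idea, keeping a small state and folding each new observation into it, is what lets incremental models run on data that never fits in memory.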

Types of Incremental Learning

- Online Learning: Online learning is a form of incremental learning where data is fed into the model sequentially, one data point at a time. This approach is typically used when the model needs to learn continuously from data streams or in situations where the data is too large to be processed all at once.

- Instance-based Learning: This type of incremental learning stores individual data instances as they arrive and uses them directly at prediction time. Instead of building a global model, the system bases each decision on the stored instances closest to the query, as a k-nearest neighbors classifier does.

- Model-based Learning: This approach involves adjusting the parameters of an existing model incrementally as new data is introduced. Techniques like stochastic gradient descent (SGD) are often used to make small adjustments to the model.
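As a rough illustration of the model-based approach, the sketch below trains a linear regressor with stochastic gradient descent, one sample at a time. It is a minimal example under stated assumptions, not a reference implementation: the class `OnlineLinearRegressor`, the method name `learn_one`, the learning rate, and the synthetic noise-free target `y = 3x + 1` are all hypothetical choices made for this example.

```python
import random


class OnlineLinearRegressor:
    """Linear model updated by one SGD step per incoming sample."""

    def __init__(self, n_features, learning_rate=0.05):
        self.weights = [0.0] * n_features
        self.bias = 0.0
        self.learning_rate = learning_rate

    def predict(self, x):
        return sum(w * xi for w, xi in zip(self.weights, x)) + self.bias

    def learn_one(self, x, y):
        # Gradient of the squared error 0.5 * (prediction - y)^2.
        error = self.predict(x) - y
        for i, xi in enumerate(x):
            self.weights[i] -= self.learning_rate * error * xi
        self.bias -= self.learning_rate * error


rng = random.Random(0)  # fixed seed so the run is repeatable
model = OnlineLinearRegressor(n_features=1)

# Simulated stream: each sample is used for one update and then dropped.
for _ in range(5000):
    x = [rng.uniform(-1.0, 1.0)]
    y = 3.0 * x[0] + 1.0  # hypothetical noise-free target
    model.learn_one(x, y)

print(model.weights, model.bias)
```

Because the target here is noise-free, the weight and bias settle very close to 3 and 1. With real, noisy streams the parameters keep fluctuating, and the learning rate controls the trade-off between stability and how quickly the model follows new data.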

Advantages of Incremental Learning

- Scalability: Since data is processed in small increments, this approach scales to large datasets that cannot be processed all at once. It also allows for learning from data streams in real time.

- Speed: Incremental learning processes small batches of data rather than waiting for a full dataset to become available. Each update is cheap, and the model can be used before all the data has been collected.

- Adaptability: The model can adapt to changes in the data over time, making it useful for dynamic environments where the data may change frequently, such as in stock prices, weather forecasts, or user behavior.

- Resource Efficiency: Since it does not require storing all data in memory at once, incremental learning is more memory-efficient than traditional batch learning methods.

Applications of Incremental Learning

- Online Retail: Incremental learning can be used to predict consumer behavior, recommend products, and personalize user experiences in real time.

- Healthcare: In the medical field, incremental learning helps in predicting disease outcomes by continuously learning from new patient data.

- Autonomous Vehicles: Self-driving cars need to learn from the constantly changing environment around them. Incremental learning allows these systems to adapt quickly to new information such as road conditions and driver behavior.

- Natural Language Processing (NLP): In NLP applications like sentiment analysis and machine translation, incremental learning can help update models continuously as new language data (tweets, forum posts, etc.) comes in.

Challenges in Incremental Learning

- Catastrophic Forgetting: A main challenge in incremental learning is catastrophic forgetting, where the model loses previously learned knowledge as it adapts to new data. Techniques such as regularization and memory replay are employed to mitigate this issue.

- Concept Drift: In dynamic environments, the underlying data distribution, and with it the relationship between inputs and targets, may change over time, so a model fit to older data becomes less accurate. This is known as concept drift, and detecting and handling it effectively is a major challenge in incremental learning.

- Quality of Data: Since incremental learning models continuously update themselves, the quality of incoming data becomes crucial. Poor-quality data can lead to inaccurate models and degraded performance.
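The memory replay technique mentioned under catastrophic forgetting can be sketched as a small, fixed-size buffer of past examples that are mixed into each new update. The example below is one possible design, not a standard API: the class `ReplayBuffer`, its capacity, and the `(feature, label)` tuples are assumptions for illustration, and reservoir sampling is used here as one reasonable way to keep a uniform sample of the stream in bounded memory.

```python
import random


class ReplayBuffer:
    """Fixed-size uniform sample of a stream, kept via reservoir sampling."""

    def __init__(self, capacity, seed=0):
        self.capacity = capacity
        self.items = []
        self.seen = 0  # total number of examples offered so far
        self._rng = random.Random(seed)

    def add(self, example):
        self.seen += 1
        if len(self.items) < self.capacity:
            self.items.append(example)
        else:
            # Keep the new example with probability capacity / seen.
            j = self._rng.randrange(self.seen)
            if j < self.capacity:
                self.items[j] = example

    def sample(self, k):
        return self._rng.sample(self.items, min(k, len(self.items)))


buffer = ReplayBuffer(capacity=50)
for step in range(1000):
    new_example = (step, step % 2)  # hypothetical (feature, label) pair
    replayed = buffer.sample(4)     # older examples to rehearse
    training_batch = [new_example] + replayed
    # A real system would update the model on training_batch here.
    buffer.add(new_example)

print(len(buffer.items), len(training_batch))
```

Rehearsing a few stored examples alongside each new one keeps older patterns in the training signal, at the cost of holding a bounded number of past examples in memory.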

Conclusion

Incremental learning represents a powerful method for continuously adapting machine learning models to evolving data, making it suitable for a wide range of real-time applications. With its ability to process data in small increments, adapt to changes over time, and operate efficiently in resource-constrained environments, incremental learning is a key approach in many domains. However, it also presents challenges such as catastrophic forgetting and concept drift, which need to be addressed for optimal performance.

As technology continues to evolve, incremental learning will likely play an increasingly important role in artificial intelligence, especially in environments where data is abundant and constantly changing.

Source: Medium.com