Human Trust in the Age of AI

How Do Humans Trust Each Other When Everything Can Be Generated by AI?

Introduction: The New Trust Problem

Artificial intelligence is changing the meaning of creation. Text, images, videos, music, code, resumes, business plans, product demos, and even personal messages can now be generated in seconds. What once required human effort, skill, time, and intention can now be produced by machines with increasing quality.

This creates a new question for society: when everything can be generated by AI, how do humans trust each other? In the past, we often trusted people based on what they showed us. A portfolio, a certificate, a written statement, a profile, or a professional reputation could act as signals of credibility. But in the AI era, signals can be easily copied, polished, or manufactured.

Trust will not disappear, but it will change. The future of trust will depend less on what people claim and more on what can be verified, observed, and proven through consistent action.

The Collapse of Surface-Level Credibility

For many years, people used surface-level signals to judge credibility. A well-written CV, a professional LinkedIn profile, a polished website, or a confident email could make someone appear trustworthy. These signals were never perfect, but they were useful because they required some level of effort.

AI weakens these signals. Anyone can now generate a professional bio, write expert-level posts, create realistic images, design a beautiful pitch deck, or produce convincing content in a short time. The barrier between real expertise and artificial presentation is becoming much thinner.

This does not mean everyone is dishonest. It means appearance alone is no longer enough. A person may sound skilled without being skilled. A company may look advanced without having real capability. A profile may appear impressive without representing actual experience.

As AI-generated content becomes normal, trust will move away from polished presentation and toward deeper forms of evidence.

From “Trust Me” to “Show Me”

The old trust model was often based on claims. People said they had experience, knowledge, or expertise, and others decided whether to believe them. In the age of AI, claims are too easy to generate.

The new trust model will be based on proof. Instead of asking, “What do you say you can do?” people will ask, “What have you actually done?” Instead of trusting a perfect description, they will look for evidence of real work, real contribution, real outcomes, and real human judgment.

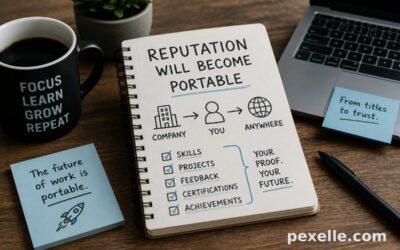

This shift is important in education, hiring, business, freelancing, investing, and online communities. Trust will increasingly depend on visible history: completed projects, verified skills, peer validation, public contributions, work samples, transaction records, and reputation built over time.

In simple terms, trust will become more evidence-based.

Human Effort Becomes More Valuable

When content becomes cheap, effort becomes valuable. If anyone can generate an article, image, or presentation instantly, then the real question becomes: what part of this work reflects human thinking, taste, responsibility, and decision-making?

AI can produce outputs, but it does not own the consequences. Humans still choose the direction, define the purpose, evaluate the quality, take responsibility, and make ethical decisions. That human layer will become one of the strongest foundations of trust.

People will trust those who can explain their reasoning, defend their choices, improve their work, respond to criticism, and show consistency over time. The final output may involve AI, but the human responsibility behind the output will matter more than ever.

In the future, saying “I used AI” may not reduce trust. But hiding AI use, exaggerating personal ability, or presenting generated work as deep original expertise may damage trust.

Verification Will Become a Core Social Infrastructure

The AI era will create demand for verification systems. These systems may include digital credentials, blockchain-based records, verified portfolios, identity checks, skill assessments, expert reviews, timestamps, audit trails, and reputation platforms.

The purpose of verification is not to remove trust. It is to support trust with evidence. For example, a person may claim they are skilled in software development. A verification system could show completed projects, peer reviews, code contributions, certificates, test results, and work history.

This creates a stronger trust layer than a simple CV or profile. It also helps reduce fake expertise, inflated claims, and AI-generated self-promotion.

In the future, professional identity may become less about titles and more about verified capability. People will not only ask, “What is your job title?” They will ask, “What can you prove you know, and who has validated it?”
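As one illustration of the timestamps and audit trails mentioned above, here is a minimal sketch of a tamper-evident work history. It assumes a simple hash chain, where each entry stores the hash of the entry before it, so editing an old record breaks every later link. The names `append_entry`, `verify_trail`, and `GENESIS`, and the record fields, are hypothetical and chosen only for this example. A real verification system would also need identity checks and trusted timestamps.

```python
import hashlib
import json

# Hypothetical starting value for the first entry in the chain.
GENESIS = "0" * 64


def entry_hash(prev_hash: str, record: dict) -> str:
    """Hash a record together with the hash of the entry before it."""
    payload = json.dumps(record, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(trail: list, record: dict) -> None:
    """Add a record to the trail, linking it to the previous entry."""
    prev_hash = trail[-1]["hash"] if trail else GENESIS
    trail.append({
        "record": record,
        "prev_hash": prev_hash,
        "hash": entry_hash(prev_hash, record),
    })


def verify_trail(trail: list) -> bool:
    """Recompute every hash and check that the links are unbroken."""
    prev_hash = GENESIS
    for entry in trail:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


trail = []
append_entry(trail, {"who": "alice", "what": "fixed login bug", "when": "2024-01-15T10:00:00Z"})
append_entry(trail, {"who": "alice", "what": "reviewed peer code", "when": "2024-02-03T14:30:00Z"})
print(verify_trail(trail))  # True

# Rewriting history after the fact is detectable.
trail[0]["record"]["what"] = "led the whole project"
print(verify_trail(trail))  # False
```

The sketch only shows that a record has not been changed since it was written. It does not show that the record was true in the first place, which is why the essay pairs such records with peer reviews and human validation.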

Authenticity Will Become a Competitive Advantage

As AI-generated content floods the internet, authenticity will become more powerful. People will look for signs of real human presence: personal experience, original opinions, honest mistakes, direct communication, lived context, and emotional depth.

Perfect content may become less impressive because perfection can be automated. Human credibility may come from being specific, transparent, and consistent. A real story, a real process, or a real lesson learned may become more valuable than generic professional content.

This is especially important for creators, founders, educators, consultants, and experts. Audiences will not only judge the quality of content. They will judge whether the person behind it feels real, accountable, and trustworthy.

In an AI-heavy world, being human becomes a signal.

Reputation Will Be Built Through Consistency

Trust is not created in one moment. It is built through repeated behavior. AI can generate a convincing message once, but it cannot easily fake years of consistent contribution, collaboration, delivery, and accountability.

This is why reputation will become more important. People will trust those who have a track record. A strong reputation will come from repeated proof: delivering work, helping others, keeping promises, admitting mistakes, and improving over time.

In professional environments, this means trust will depend more on long-term evidence than short-term presentation. In online communities, it means people with consistent history will have more credibility than accounts with polished but shallow content.

AI may help people create faster, but trust will still require time.

The Role of Human Validation

AI can evaluate, score, summarize, and recommend, but human validation will remain essential. In many areas, especially hiring, education, healthcare, law, finance, and leadership, trust requires human judgment.

Human validation adds context. A human expert can understand nuance, intention, ethics, originality, and real-world impact. AI may detect patterns, but humans can judge meaning.

The strongest systems will likely be hybrid systems: AI helps with scale and analysis, while humans provide judgment, responsibility, and final validation. This combination can make trust faster, fairer, and more reliable, but only if designed carefully.

Trust should not be fully outsourced to machines. AI can support trust, but humans must remain accountable for trust decisions.

Transparency Will Matter More Than Perfection

In the AI age, transparency will become a key part of credibility. People will want to know whether something was AI-assisted, human-created, edited, verified, or reviewed.

This does not mean every use of AI must be treated as suspicious. AI is a tool, like a calculator, camera, or design software. The problem is not AI use itself. The problem is deception.

A transparent person or organization can say: AI helped with drafting, but the ideas, review, decisions, and responsibility are human. This kind of honesty can increase trust instead of reducing it.

The future will reward people who are clear about how work was created, what was verified, and where responsibility belongs.

The Risk of Deepfakes and Synthetic Identity

One of the biggest dangers of AI is the rise of synthetic identity. Deepfake videos, cloned voices, fake profiles, generated documents, and automated social accounts can make it difficult to know whether we are interacting with a real person.

This will create serious challenges for business, politics, education, and personal relationships. Fraud may become more convincing. Misinformation may spread faster. Fake authority may look real.

To respond to this, society will need stronger identity verification, media provenance, secure communication channels, and better public awareness. People may become more cautious about trusting screenshots, videos, voice messages, or online profiles without confirmation.

In the AI era, seeing and hearing will no longer always mean believing.

Trust Will Become More Context-Based

Not every situation requires the same level of trust. A casual AI-generated social post is not the same as a medical diagnosis, legal contract, financial report, or professional certification.

The future of trust will depend on context. Low-risk content may only need basic transparency. High-risk decisions will need stronger verification, human review, audit trails, and accountability.

For example, using AI to write a birthday message is very different from using AI to generate a legal document or evaluate a job candidate. The higher the consequence, the stronger the trust system must be.

This means trust will become layered. Different levels of evidence will be required for different levels of risk.
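The layered idea in this section can be sketched as a small lookup from risk level to required evidence. This is only an illustration: the two tiers, the evidence labels, and the function names `missing_evidence` and `is_trusted` are assumptions made for the example, loosely based on the low-risk and high-risk cases described above.

```python
from enum import Enum


class Risk(Enum):
    LOW = "low"    # e.g. a birthday message or a casual social post
    HIGH = "high"  # e.g. a legal document or a job-candidate evaluation


# Hypothetical evidence labels; each tier lists what must be present.
REQUIRED_EVIDENCE = {
    Risk.LOW: {"transparency"},
    Risk.HIGH: {
        "transparency",
        "verification",
        "human_review",
        "audit_trail",
        "accountable_owner",
    },
}


def missing_evidence(risk: Risk, provided: set) -> set:
    """Return the evidence still required at this risk level."""
    return REQUIRED_EVIDENCE[risk] - set(provided)


def is_trusted(risk: Risk, provided: set) -> bool:
    """Content passes only when nothing required is missing."""
    return not missing_evidence(risk, provided)


print(is_trusted(Risk.LOW, {"transparency"}))  # True
print(sorted(missing_evidence(Risk.HIGH, {"transparency", "human_review"})))
# ['accountable_owner', 'audit_trail', 'verification']
```

The same evidence that is enough for a low-risk message leaves a high-risk document short of several requirements, which is the sense in which the consequence sets the strength of the trust system.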

Education Must Teach Verification, Not Just Information

In the past, education focused heavily on accessing and memorizing information. But AI makes information easy to produce. The harder skill is knowing what to trust.

Future education must teach verification, critical thinking, source evaluation, ethical AI use, and evidence-based reasoning. Students and professionals will need to ask: Where did this come from? Is it accurate? Who is responsible? What evidence supports it? What might be missing?

The ability to verify information will become one of the most important human skills. In a world full of generated content, judgment becomes more valuable than access.

People who can separate signal from noise will have a major advantage.

Businesses Will Need Trust-by-Design

Companies will need to design trust into their products, services, and communication. This means being clear about AI use, protecting user data, verifying important outputs, and creating transparent processes.

For AI products, trust will depend on privacy, explainability, security, and accountability. Users will ask: Where is my data going? Is this output reliable? Can I verify it? Who is responsible if it is wrong?

Companies that ignore these questions may lose credibility. Companies that build trust from the beginning may gain a strong competitive advantage.

In the AI economy, trust will be not only a moral value but also a business asset.

The Future of Human Trust

The age of AI will not destroy human trust. It will force trust to become more mature. We will move from trusting appearances to trusting evidence, from trusting claims to trusting verified capability, and from trusting polished content to trusting consistent behavior.

AI will make creation easier, but it will also make authenticity harder to prove. This means the most trusted people and organizations will be those who combine technology with transparency, skill with evidence, and intelligence with responsibility.

The future of trust will not be based on whether something was created by AI or by a human. It will be based on whether the person behind it can prove integrity, competence, and accountability.

Conclusion: Trust Becomes a Human Responsibility

When everything can be generated, the value of being real increases. Human trust in the age of AI will depend on proof, transparency, reputation, verification, and responsibility.

AI can help us create, analyze, and communicate. But trust is still a human responsibility. Machines can generate outputs, but humans must provide meaning, ethics, context, and accountability.

The future will belong to people and organizations that understand this simple truth: in a world of infinite artificial content, trust will be built by real evidence, real behavior, and real human responsibility.

Source: Medium.com