AI Can Generate Portfolios. But Can It Generate Trust?

Introduction: The New Problem of Digital Proof

Artificial intelligence has changed the way people present themselves online. A designer can generate mockups in minutes. A developer can produce sample code with AI assistance. A marketer can create campaign examples, case studies, and polished reports without ever working with a real client. This does not mean all AI-generated work is fake or useless. In many cases, AI helps people express their skills faster and better.

But this raises a serious question for the future of work: if anyone can generate a beautiful portfolio, how do we know who actually has the ability behind it?

For many years, portfolios were used as proof. They showed what someone had built, designed, written, analyzed, or delivered. A portfolio helped employers, clients, and collaborators understand a person’s capability. Today, that signal is becoming weaker. AI can generate the appearance of competence, but appearance is not the same as trust.

The Portfolio Was Once a Strong Signal

Before the rise of generative AI, creating a good portfolio required real effort. A designer had to produce original visual work. A developer had to build functional projects. A writer had to demonstrate voice, structure, and clarity. A data analyst had to show dashboards, insights, or case studies.

The portfolio was not perfect, but it carried weight because it was difficult to fake at scale. Even if someone exaggerated their role, the work still required time, tools, and some level of knowledge.

This made portfolios valuable in hiring, freelancing, education, and professional networking. They gave people a way to prove themselves beyond a CV, degree, or job title.

AI Has Lowered the Cost of Looking Skilled

Generative AI has changed the economics of presentation. A person can now create a polished landing page, write project descriptions, generate UI screens, produce code snippets, and prepare professional-looking case studies with very little direct experience.

This creates a new gap between presentation and proof.

Someone may have a portfolio that looks impressive but does not reflect their actual decision-making, problem-solving, or execution ability. The work may be visually strong, but the person may not understand why certain choices were made. They may not be able to explain constraints, trade-offs, mistakes, or outcomes.

In other words, AI can help generate output, but it cannot automatically generate ownership.

The Difference Between Output and Competence

A portfolio shows output. Trust requires evidence of competence.

Output answers the question: “What does this look like?”

Competence answers deeper questions:

- Can this person solve real problems?

- Can they make decisions under constraints?

- Can they explain their process?

- Can they improve after feedback?

- Can they work with others?

- Can they deliver in a real environment?

AI can produce impressive artifacts, but real competence appears in context. It appears when someone must understand a problem, choose a direction, justify decisions, handle limitations, communicate clearly, and produce results that matter.

A generated portfolio may show the final image. Trust comes from seeing the thinking, process, evidence, and impact behind the image.

Why Trust Is Harder to Generate Than Content

Trust is not created by appearance alone. It is built through repeated signals that show reliability, honesty, capability, and accountability.

AI can generate a project description, but it cannot truthfully generate the experience of working with a real client. It can write a case study, but it cannot replace actual responsibility. It can produce code, but it cannot prove the person understands architecture, debugging, security, maintainability, or user needs.

Trust depends on verifiable context.

- Who created the work?

- When was it created?

- What problem was being solved?

- What role did the person play?

- What tools were used?

- Was there feedback?

- Was the work reviewed?

- Did it create measurable value?

Without these answers, a portfolio becomes a visual claim rather than reliable evidence.

The Rise of Synthetic Credibility

One of the biggest risks of AI-generated portfolios is synthetic credibility. This happens when someone appears more experienced, skilled, or successful than they really are because AI helps them create professional-looking proof.

This does not only affect dishonest people. It affects the entire trust system.

When too many portfolios look polished, employers and clients become skeptical of everyone. Beginners may look indistinguishable from experienced professionals, and genuine experts may struggle to stand out because their evidence is buried in AI-generated noise.

The result is a trust crisis in digital hiring and professional identity.

When everything can be generated, verification becomes more important than presentation.

AI Does Not Eliminate Skill; It Changes How Skill Must Be Proven

It would be wrong to say that AI makes portfolios meaningless. AI is now part of modern work. A strong professional may use AI to speed up design, coding, writing, research, or analysis. The issue is not whether AI was used. The issue is whether the person can demonstrate real understanding and responsibility.

In the future, the best professionals will not be those who avoid AI completely. They will be those who can use AI intelligently while still showing human judgment.

This means portfolios must evolve. They should not only show final outputs. They should show process, decisions, constraints, collaboration, validation, and results.

A modern portfolio should answer not only “What did you create?” but also “How did you think?”

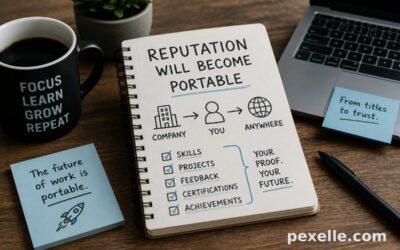

The New Portfolio: From Showcase to Evidence System

The traditional portfolio is a showcase. The future portfolio should be an evidence system.

Instead of only displaying finished work, a trustworthy portfolio should include multiple layers of proof:

- Project context

- Problem definition

- Role and responsibility

- Process steps

- Tools used, including AI tools

- Feedback received

- Iterations and improvements

- Real-world constraints

- Results or measurable impact

- Peer, mentor, or client validation

This makes the portfolio harder to fake and more useful to evaluate.

A beautiful UI screen is interesting. But a screen combined with user research, design decisions, iteration history, implementation notes, and feedback becomes much stronger evidence.
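One way to picture the evidence layers listed above is as a structured record per project rather than a single image. The following is a minimal sketch, not an established schema: the class name `PortfolioEvidence`, its field names, and the helper `missing_layers` are all hypothetical, chosen only to mirror the list in this section.

```python
from dataclasses import dataclass, field, fields


@dataclass
class PortfolioEvidence:
    """One project entry with a field per evidence layer (hypothetical schema)."""
    project_context: str = ""
    problem_definition: str = ""
    role_and_responsibility: str = ""
    process_steps: list[str] = field(default_factory=list)
    tools_used: list[str] = field(default_factory=list)  # including AI tools
    feedback_received: list[str] = field(default_factory=list)
    iterations: list[str] = field(default_factory=list)
    constraints: list[str] = field(default_factory=list)
    results: str = ""
    validators: list[str] = field(default_factory=list)  # peers, mentors, clients


def missing_layers(entry: PortfolioEvidence) -> list[str]:
    """Return the names of evidence layers that are still empty."""
    return [f.name for f in fields(entry) if not getattr(entry, f.name)]


# Example entry with only three layers filled in (illustrative values).
entry = PortfolioEvidence(
    project_context="Checkout redesign for a small online shop",
    role_and_responsibility="Sole designer",
    tools_used=["Figma", "AI image generator for early mockups"],
)
print(missing_layers(entry))
```

A record like this does not verify anything by itself. It only makes gaps visible: an entry with a finished screen but no feedback, iterations, or validators is still a showcase, not yet evidence.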

Process Will Become More Valuable Than Polish

When AI can generate almost anything, polish is no longer rare. Many people can generate polished outputs quickly. What becomes rare is authentic process.

Employers and clients will increasingly ask:

- Why did you make this decision?

- What alternatives did you consider?

- What failed?

- What did you change after feedback?

- What would you improve next time?

- What part did AI help with?

- What part required your own judgment?

These questions reveal whether the person owns the work or only owns the final file.

The more AI improves, the more human process becomes a trust signal.

Verification Will Become a Competitive Advantage

The future of portfolios will likely move toward verified skill evidence. This could include authenticated project history, peer reviews, employer confirmations, skill assessments, recorded explanations, version history, certificates, badges, and real-world performance data.

For example, a developer’s trust signal may come from Git commit history, code reviews, issue resolution, production contributions, and technical explanations.

A designer’s trust signal may come from design iterations, user testing notes, stakeholder feedback, and implementation outcomes.

A marketer’s trust signal may come from campaign performance, audience data, A/B testing, and business results.

A learner’s trust signal may come from completed tasks, mentor reviews, practical challenges, and evidence-based badges.

The key shift is simple: claims must become verifiable.
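To make the developer example concrete, here is a small sketch of turning Git commit history into a summary a reviewer could check. It assumes the log was exported with `git log --pretty=format:'%an|%ad' --date=short`, which prints one `author|YYYY-MM-DD` line per commit. The function name `summarize_commits` and the sample data are hypothetical.

```python
def summarize_commits(log_text):
    """Summarize 'author|YYYY-MM-DD' lines into per-author commit statistics."""
    summary = {}
    for line in log_text.splitlines():
        # Split on the last '|' so author names containing '|' still parse.
        author, sep, date = line.strip().rpartition("|")
        if not sep:
            continue  # skip blank or malformed lines
        entry = summary.setdefault(
            author, {"commits": 0, "first": date, "last": date}
        )
        entry["commits"] += 1
        # ISO dates (YYYY-MM-DD) sort correctly as plain strings.
        entry["first"] = min(entry["first"], date)
        entry["last"] = max(entry["last"], date)
    return summary


# Illustrative sample, not real project data.
sample_log = """Ada|2024-03-02
Ada|2024-01-15
Lin|2024-02-20"""

for author, stats in summarize_commits(sample_log).items():
    print(author, stats)
```

Commit metadata can be edited, and commits can themselves be AI-assisted, so a summary like this is best treated as one signal alongside code reviews and technical explanations rather than as proof.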

The Human Layer of Trust

Trust is not only technical. It is also human.

People trust professionals who are transparent about their abilities, honest about their limitations, and clear about their contributions. In the age of AI, honesty becomes even more important.

A person who says, “I used AI to generate the first draft, then I refined the logic, tested the result, and adjusted it based on feedback,” may be more trustworthy than someone who hides AI usage completely.

Transparency does not weaken trust. In many cases, it strengthens it.

The problem is not AI assistance. The problem is false ownership.

Employers Must Change How They Evaluate Talent

Organizations can no longer rely on polished portfolios alone. They need better evaluation methods.

Instead of asking candidates to submit only final samples, employers can ask for short explanations, process breakdowns, live discussions, practical tasks, or evidence of real contribution.

They should focus on judgment, problem-solving, communication, and adaptability.

A candidate may use AI, but they should be able to explain the result, defend decisions, identify weaknesses, and improve the work. This is especially important in roles where quality, security, ethics, or business impact matter.

The future hiring question is not “Did you use AI?”

The better question is: “Can you prove you understand what you delivered?”

Creators Must Build Trust Intentionally

Professionals, freelancers, and students also need to adapt. A portfolio should no longer be treated as a gallery of perfect results. It should become a story of learning, execution, and evidence.

Creators should document their process. They should show drafts, decisions, feedback, revisions, and outcomes. They should explain where AI helped and where their own expertise mattered.

This makes the portfolio more credible and more human.

In a world full of generated content, authenticity becomes a powerful advantage.

AI Can Support Trust, But It Cannot Replace It

AI can help organize evidence, summarize project history, explain work clearly, and create better documentation. It can help people present their skills in a more structured way.

But AI cannot replace the real foundation of trust.

Trust still requires truth. It requires responsibility. It requires proof. It requires consistency between what someone claims and what they can actually do.

AI can generate content. It can generate designs. It can generate code. It can generate stories.

But trust must be earned.

Conclusion: The Future Belongs to Verifiable Talent

AI has made it easier than ever to create a portfolio. That is both exciting and dangerous. It gives more people access to professional presentation, but it also weakens old signals of credibility.

The future will not belong to people with the most polished portfolios. It will belong to people who can prove their skills with evidence.

Portfolios will not disappear. They will evolve.

The next generation of portfolios will be more transparent, more contextual, and more verifiable. They will show not only what someone created, but how they created it, why they made decisions, what impact it had, and who can validate the work.

AI can generate a portfolio.

But trust requires evidence.

Source: Medium.com